Elasticsearch settings

Here is a list of Elasticsearch settings (under the elasticsearch. prefix):

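| Name | Default value | Documentation |
|---|---|---|
| elasticsearch.index | job name | Index settings for documents |
| elasticsearch.index_folder | job name + _folder | Index settings for folders |
| elasticsearch.push_templates | true | Mappings |
| elasticsearch.bulk_size | 100 | Bulk settings |
| elasticsearch.flush_interval | "5s" | Bulk settings |
| elasticsearch.byte_size | "10mb" | Bulk settings |
| elasticsearch.pipeline | not set | Using Ingest Node Pipeline |
| elasticsearch.nodes | https://127.0.0.1:9200 | Node settings |
| elasticsearch.path_prefix | not set | Path prefix |
| elasticsearch.api_key | not set | API Key |
| elasticsearch.access_token | not set | Access Token |
| elasticsearch.username | not set | Basic Authentication (deprecated) |
| elasticsearch.password | not set | Basic Authentication (deprecated) |
| elasticsearch.ssl_verification | true | SSL Configuration |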

Index settings

Index settings for documents

By default, FSCrawler indexes your data in an index whose name is the same as the crawler name (name property). You can change it by setting the index field:

```yaml
name: "test"
elasticsearch:
  index: "docs"
```

Index settings for folders

FSCrawler also indexes folders in an index whose name is the crawler name (name property) plus the _folder suffix, for example test_folder. You can change it by setting the index_folder field:

```yaml
name: "test"
elasticsearch:
  index_folder: "folders"
```
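The two naming rules above can be summarized in a few lines of Python. The helper resolve_index_names is hypothetical: it only mirrors the defaults described in this section (the job name for documents, the job name plus _folder for folders) and is not FSCrawler's own code.

```python
from typing import Optional, Tuple


def resolve_index_names(job_name: str,
                        index: Optional[str] = None,
                        index_folder: Optional[str] = None) -> Tuple[str, str]:
    """Return (documents index, folders index) using the documented defaults.

    Hypothetical helper mirroring the rules of this section, not FSCrawler code.
    """
    docs_index = index if index else job_name
    folders_index = index_folder if index_folder else job_name + "_folder"
    return docs_index, folders_index


print(resolve_index_names("test"))  # ('test', 'test_folder')
print(resolve_index_names("test", index="docs", index_folder="folders"))  # ('docs', 'folders')
```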

Mappings

New in version 2.10.

FSCrawler defines the following Component Templates to define the index settings and mappings:

- fscrawler_alias: defines the alias fscrawler so you can search using this alias.
- fscrawler_settings_shards: defines the number of shards to use for the index.
- fscrawler_settings_total_fields: defines the maximum number of fields for the index.
- fscrawler_mapping_attributes: defines the mapping for the attributes field.
- fscrawler_mapping_file: defines the mapping for the file field.
- fscrawler_mapping_path: defines an analyzer named fscrawler_path, which uses a path hierarchy tokenizer, and the mapping for the path field.
- fscrawler_mapping_attachment: defines the mapping for the attachment field.
- fscrawler_mapping_content: defines the mapping for the content field.
- fscrawler_mapping_meta: defines the mapping for the meta field.

You can see the content of those templates by running:

```
GET _component_template/fscrawler*
```

Then, FSCrawler applies those templates to the indices being created.
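The fscrawler_path analyzer defined by fscrawler_mapping_path is built on a path hierarchy tokenizer, which emits one token per ancestor path. The Python function below is a simplified local emulation, assuming the tokenizer's default / delimiter and none of its skip, reverse or replacement options; it only shows which tokens a path such as /tmp/test.odt would produce, and it is not how Elasticsearch implements the tokenizer.

```python
from typing import List


def path_hierarchy_tokens(path: str, delimiter: str = "/") -> List[str]:
    """Emulate path hierarchy tokens: each prefix ending before a delimiter, plus the full path.

    Simplified sketch: ignores the tokenizer's skip, reverse and replacement options.
    """
    tokens = []
    for i, ch in enumerate(path):
        # A delimiter closes the prefix before it; skip the empty prefix of a leading "/"
        if ch == delimiter and i > 0:
            tokens.append(path[:i])
    tokens.append(path)
    return tokens


print(path_hierarchy_tokens("/tmp/test.odt"))  # ['/tmp', '/tmp/test.odt']
```

Because every ancestor becomes a token, a term query on /tmp can match all documents stored under that directory.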

You can prevent FSCrawler from creating or updating the index templates by setting push_templates to false:

```yaml
name: "test"
elasticsearch:
  push_templates: false
```

If you want to know which component templates and index templates will be created, you can find them in the FSCrawler source code.

Creating your own mapping (analyzers)

If you want to define your own index settings and mapping, for example to set analyzers, you can update the relevant component template before starting FSCrawler.

The following example uses the French language analyzer (french) to index the content field:

```
PUT _component_template/fscrawler_mapping_content
{
  "template": {
    "mappings": {
      "properties": {
        "content": {
          "type": "text",
          "analyzer": "french"
        }
      }
    }
  }
}
```

Replace existing mapping

Unfortunately, you cannot change the mapping of existing data. You first need to remove the existing index, which deletes all existing data, and then restart FSCrawler with the new mapping.

Alternatively, you might try the Elasticsearch Reindex API.

Bulk settings

FSCrawler uses bulk requests to send data to Elasticsearch. By default, a bulk request is executed every 100 operations, every 5 seconds, or every 10 megabytes, whichever comes first. You can change these defaults using bulk_size, byte_size and flush_interval:

```yaml
name: "test"
elasticsearch:
  bulk_size: 1000
  byte_size: "500kb"
  flush_interval: "2s"
```
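The three thresholds combine with a logical OR: whichever is reached first triggers the bulk request. The class below is a hypothetical, simplified model of that rule, not FSCrawler's or the Elasticsearch client's bulk processor. BulkBuffer, add, should_flush and drain are illustrative names, and the byte count is approximated by the size of each serialized document (real bulk bodies also contain action lines).

```python
import json
import time
from typing import Any, Dict, List, Optional


class BulkBuffer:
    """Simplified model of 'flush on operation count, payload size or elapsed time'."""

    def __init__(self, bulk_size: int = 100, byte_size: int = 10 * 1024 * 1024,
                 flush_interval: float = 5.0) -> None:
        self.bulk_size = bulk_size            # max number of operations
        self.byte_size = byte_size            # max approximate payload size in bytes
        self.flush_interval = flush_interval  # max age of the oldest operation, in seconds
        self.actions: List[str] = []
        self.bytes = 0
        self.started: Optional[float] = None

    def add(self, doc: Dict[str, Any], now: Optional[float] = None) -> None:
        line = json.dumps(doc)
        if self.started is None:
            # The timer starts with the first buffered operation
            self.started = time.monotonic() if now is None else now
        self.actions.append(line)
        self.bytes += len(line.encode("utf-8"))

    def should_flush(self, now: Optional[float] = None) -> bool:
        if not self.actions:
            return False
        now = time.monotonic() if now is None else now
        return (len(self.actions) >= self.bulk_size
                or self.bytes >= self.byte_size
                or now - self.started >= self.flush_interval)

    def drain(self) -> List[str]:
        """Return the buffered operations and reset the buffer (the caller sends them)."""
        actions, self.actions, self.bytes, self.started = self.actions, [], 0, None
        return actions


# Same thresholds as the settings above: 1000 operations, 500kb, 2s
buf = BulkBuffer(bulk_size=1000, byte_size=500 * 1024, flush_interval=2.0)
buf.add({"content": "hello"}, now=0.0)
print(buf.should_flush(now=1.0))  # False
print(buf.should_flush(now=2.5))  # True
```

The optional now parameter only exists to make the timing deterministic in examples; by default the sketch uses time.monotonic().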

Tip

Elasticsearch has a default limit of 100mb per HTTP request, as described in the Elasticsearch HTTP module documentation.

This means that if you are indexing a massive bulk of documents, you might hit that limit and FSCrawler will throw an error like:

```
entity content is too long [xxx] for the configured buffer limit [104857600].
```

You can either raise this limit on the Elasticsearch side by setting http.max_content_length to a higher value, but be aware that this will consume much more memory on the Elasticsearch side.

Or you can decrease the bulk_size or byte_size setting to a smaller value.
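Elasticsearch byte-size values use binary multiples, so 100mb is 100 × 1024 × 1024 = 104857600 bytes, the number that appears in the error message. The helper below is a hypothetical sketch that converts values such as "500kb" or "10mb" to bytes so you can compare a byte_size setting with that limit; it only understands the b, kb, mb and gb units.

```python
import re

# Binary multiples, as used by Elasticsearch byte-size settings
_UNITS = {"b": 1, "kb": 1024, "mb": 1024 ** 2, "gb": 1024 ** 3}


def parse_byte_size(value: str) -> int:
    """Convert a size such as '500kb' or '10mb' to a number of bytes (sketch)."""
    match = re.fullmatch(r"\s*(\d+)\s*(b|kb|mb|gb)\s*", value.lower())
    if match is None:
        raise ValueError(f"unsupported byte size: {value!r}")
    number, unit = match.groups()
    return int(number) * _UNITS[unit]


limit = parse_byte_size("100mb")          # default http.max_content_length
print(limit)                              # 104857600
print(parse_byte_size("500kb") < limit)   # True
```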

Using Ingest Node Pipeline

New in version 2.2.

If you are using an Elasticsearch cluster running version 5.0 or later, you can use an Ingest Node pipeline to transform documents sent by FSCrawler before they are actually indexed.

For example, if you have the following pipeline:

```
PUT _ingest/pipeline/fscrawler
{
  "description" : "fscrawler pipeline",
  "processors" : [
    {
      "set" : {
        "field": "foo",
        "value": "bar"
      }
    }
  ]
}
```

In FSCrawler settings, set the elasticsearch.pipeline option:

```yaml
name: "test"
elasticsearch:
  pipeline: "fscrawler"
```
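To picture the effect of this pipeline on a document sent by FSCrawler, here is a local Python simulation. It is only an illustration: Elasticsearch runs the real pipeline, and this sketch assumes a pipeline made solely of set processors with literal values on top-level fields (no templates, conditions or dotted field names).

```python
import copy
from typing import Any, Dict

PIPELINE = {
    "description": "fscrawler pipeline",
    "processors": [{"set": {"field": "foo", "value": "bar"}}],
}


def simulate(pipeline: Dict[str, Any], doc: Dict[str, Any]) -> Dict[str, Any]:
    """Apply literal 'set' processors to a copy of doc; other processors are not simulated."""
    result = copy.deepcopy(doc)
    for processor in pipeline["processors"]:
        if "set" in processor:
            spec = processor["set"]
            result[spec["field"]] = spec["value"]
        else:
            raise NotImplementedError(f"processor not simulated: {processor}")
    return result


print(simulate(PIPELINE, {"content": "hello"}))
# {'content': 'hello', 'foo': 'bar'}
```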

Note

Folder objects are not sent through the pipeline, as they are mostly internal objects.

Node settings

FSCrawler uses the Elasticsearch REST layer to send data to your running cluster. By default, it connects to https://127.0.0.1:9200, which is the default when running a local node on your machine.

Note that using HTTPS requires SSL to be set up. For more information, read SSL Configuration.

Of course, in production, you would probably change this and connect to a production cluster:

```yaml
name: "test"
elasticsearch:
  nodes:
    - url: "https://mynode1.mycompany.com:9200"
```

If you are using the Elasticsearch Service by Elastic, you can simply paste the Cloud ID available in the Cloud Console:

```yaml
name: "test"
elasticsearch:
  nodes:
    - cloud_id: "fscrawler:ZXVyb3BlLXdlc3QxLmdjcC5jbG91ZC5lcy5pbyQxZDFlYTk5Njg4Nzc0NWE2YTJiN2NiNzkzMTUzNDhhMyQyOTk1MDI3MzZmZGQ0OTI5OTE5M2UzNjdlOTk3ZmU3Nw=="
```

This ID will be used to automatically generate the right host, port and scheme.
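A Cloud ID is commonly described as a label, a colon, and a base64-encoded payload of the form domain[:port]$elasticsearch-id$kibana-id. Assuming that format, and port 443 when none is embedded, the Python sketch below shows how the Elasticsearch URL can be derived from the sample ID. FSCrawler does this for you; its actual code may differ.

```python
import base64


def cloud_id_to_url(cloud_id: str) -> str:
    """Derive the Elasticsearch HTTPS URL from a Cloud ID (sketch of the usual format)."""
    # "<label>:<base64 payload>"; base64 never contains ":", so split on the last one
    _label, _, payload = cloud_id.rpartition(":")
    decoded = base64.b64decode(payload).decode("utf-8")
    # Payload: "<domain>[:port]$<elasticsearch id>$<kibana id>"
    domain, es_id = decoded.split("$")[:2]
    if ":" in domain:
        domain, port = domain.split(":", 1)
    else:
        port = "443"  # assumed default when no port is embedded
    return f"https://{es_id}.{domain}:{port}"


CLOUD_ID = "fscrawler:ZXVyb3BlLXdlc3QxLmdjcC5jbG91ZC5lcy5pbyQxZDFlYTk5Njg4Nzc0NWE2YTJiN2NiNzkzMTUzNDhhMyQyOTk1MDI3MzZmZGQ0OTI5OTE5M2UzNjdlOTk3ZmU3Nw=="
print(cloud_id_to_url(CLOUD_ID))
# https://1d1ea996887745a6a2b7cb79315348a3.europe-west1.gcp.cloud.es.io:443
```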

Hint

In the context of the Elasticsearch Service by Elastic, you will most likely also need to provide the username and password. See Using Credentials (Security).

You can define multiple nodes:

```yaml
name: "test"
elasticsearch:
  nodes:
    - url: "https://mynode1.mycompany.com:9200"
    - url: "https://mynode2.mycompany.com:9200"
    - url: "https://mynode3.mycompany.com:9200"
```

Note

New in version 2.2: you can use HTTPS instead of HTTP.

```yaml
name: "test"
elasticsearch:
  nodes:
    - url: "https://CLUSTERID.eu-west-1.aws.found.io:9243"
```

For more information, read SSL Configuration.

Path prefix

New in version 2.7: if your Elasticsearch cluster is running behind a proxy that rewrites URLs, you might have to specify a path prefix. This can be done with the path_prefix setting:

```yaml
name: "test"
elasticsearch:
  nodes:
    - url: "http://mynode1.mycompany.com:9200"
  path_prefix: "/path/to/elasticsearch"
```

Note

The same path_prefix applies to all nodes.
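Conceptually, the prefix sits between each node URL and the Elasticsearch endpoint path. The Python sketch below illustrates that assumption with a hypothetical endpoint_url helper; it is not taken from FSCrawler's source.

```python
def endpoint_url(node_url: str, path_prefix: str, endpoint: str) -> str:
    """Join a node URL, an optional path prefix and an endpoint with single slashes."""
    parts = [node_url.rstrip("/")]
    if path_prefix:
        parts.append(path_prefix.strip("/"))
    parts.append(endpoint.lstrip("/"))
    return "/".join(parts)


print(endpoint_url("http://mynode1.mycompany.com:9200", "/path/to/elasticsearch", "_bulk"))
# http://mynode1.mycompany.com:9200/path/to/elasticsearch/_bulk
```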

Using Credentials (Security)

If you have a secured cluster, you can use several methods to connect to it:

- API Key
- Access Token
- Basic Authentication (not recommended / deprecated)

API Key

New in version 2.10.

Let’s create an API Key named fscrawler:

```
POST /_security/api_key
{
  "name": "fscrawler"
}
```

This gives something like:

```json
{
  "id": "VuaCfGcBCdbkQm-e5aOx",
  "name": "fscrawler",
  "expiration": 1544068612110,
  "api_key": "ui2lp2axTNmsyakw9tvNnw",
  "encoded": "VnVhQ2ZHY0JDZGJrUW0tZTVhT3g6dWkybHAyYXhUTm1zeWFrdzl0dk5udw=="
}
```

Then you can use the encoded API Key in FSCrawler settings:

```yaml
name: "test"
elasticsearch:
  api_key: "VnVhQ2ZHY0JDZGJrUW0tZTVhT3g6dWkybHAyYXhUTm1zeWFrdzl0dk5udw=="
```
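The encoded value is the base64 encoding of id:api_key, and Elasticsearch expects it in an Authorization: ApiKey header. The Python snippet below recomputes it from the sample response, which can help if a tool only gives you the id and api_key fields; encode_api_key is an illustrative name.

```python
import base64


def encode_api_key(key_id: str, api_key: str) -> str:
    """Return base64("id:api_key"), the value reported as 'encoded'."""
    return base64.b64encode(f"{key_id}:{api_key}".encode("utf-8")).decode("ascii")


encoded = encode_api_key("VuaCfGcBCdbkQm-e5aOx", "ui2lp2axTNmsyakw9tvNnw")
print(encoded)  # VnVhQ2ZHY0JDZGJrUW0tZTVhT3g6dWkybHAyYXhUTm1zeWFrdzl0dk5udw==
print({"Authorization": f"ApiKey {encoded}"})
```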

Access Token

New in version 2.10.

Let’s create an access token using the client_credentials grant type:

```
POST /_security/oauth2/token
{
  "grant_type" : "client_credentials"
}
```

This gives something like:

```json
{
  "access_token" : "dGhpcyBpcyBub3QgYSByZWFsIHRva2VuIGJ1dCBpdCBpcyBvbmx5IHRlc3QgZGF0YS4gZG8gbm90IHRyeSB0byByZWFkIHRva2VuIQ==",
  "type" : "Bearer",
  "expires_in" : 1200,
  "authentication" : {
    "username" : "test_admin",
    "roles" : [
      "superuser"
    ],
    "full_name" : null,
    "email" : null,
    "metadata" : { },
    "enabled" : true,
    "authentication_realm" : {
      "name" : "file",
      "type" : "file"
    },
    "lookup_realm" : {
      "name" : "file",
      "type" : "file"
    },
    "authentication_type" : "realm"
  }
}
```

Then you can use the generated Access Token in FSCrawler settings:

```yaml
name: "test"
elasticsearch:
  access_token: "dGhpcyBpcyBub3QgYSByZWFsIHRva2VuIGJ1dCBpdCBpcyBvbmx5IHRlc3QgZGF0YS4gZG8gbm90IHRyeSB0byByZWFkIHRva2VuIQ=="
```
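An access token is sent in an Authorization: Bearer header, and the sample response says it expires after expires_in seconds (1200, that is 20 minutes). The Python sketch below only illustrates the HTTP header involved and the expiry arithmetic; bearer_header and token_expired are hypothetical helpers, not part of FSCrawler.

```python
from typing import Dict


def bearer_header(access_token: str) -> Dict[str, str]:
    """Build the Authorization header used with an Elasticsearch access token."""
    return {"Authorization": f"Bearer {access_token}"}


def token_expired(issued_at: float, expires_in: int, now: float) -> bool:
    """True once expires_in seconds have elapsed since issued_at (all in seconds)."""
    return now >= issued_at + expires_in


print(bearer_header("<access_token>"))
print(token_expired(issued_at=0, expires_in=1200, now=600))   # False
print(token_expired(issued_at=0, expires_in=1200, now=1200))  # True
```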

Basic Authentication (deprecated)

New in version 2.2.

The best practice is to use an API Key or an Access Token. But if you have no other choice, you can still use Basic Authentication.

You can provide the username and password to FSCrawler:

```yaml
name: "test"
elasticsearch:
  username: "elastic"
  password: "changeme"
```

Warning

Be aware that the Elasticsearch password is stored in plain text in your job settings file.

A better practice is to set only the username in the settings file, or to pass it with the --username elastic option when starting FSCrawler.

If the password is not defined, you will be prompted when starting the job:

```
22:46:42,528 INFO [f.p.e.c.f.FsCrawler] Password for elastic:
```
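With Basic Authentication, each request carries base64("username:password") in an Authorization: Basic header. The value is only encoded, not encrypted, as this short Python illustration with the sample credentials shows; basic_auth_header is an illustrative name.

```python
import base64
from typing import Dict


def basic_auth_header(username: str, password: str) -> Dict[str, str]:
    """Build the Authorization header for HTTP Basic Authentication."""
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return {"Authorization": f"Basic {token}"}


print(basic_auth_header("elastic", "changeme"))
# {'Authorization': 'Basic ZWxhc3RpYzpjaGFuZ2VtZQ=='}

# Anyone who sees the header can recover the credentials
print(base64.b64decode("ZWxhc3RpYzpjaGFuZ2VtZQ==").decode("utf-8"))  # elastic:changeme
```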

User permissions

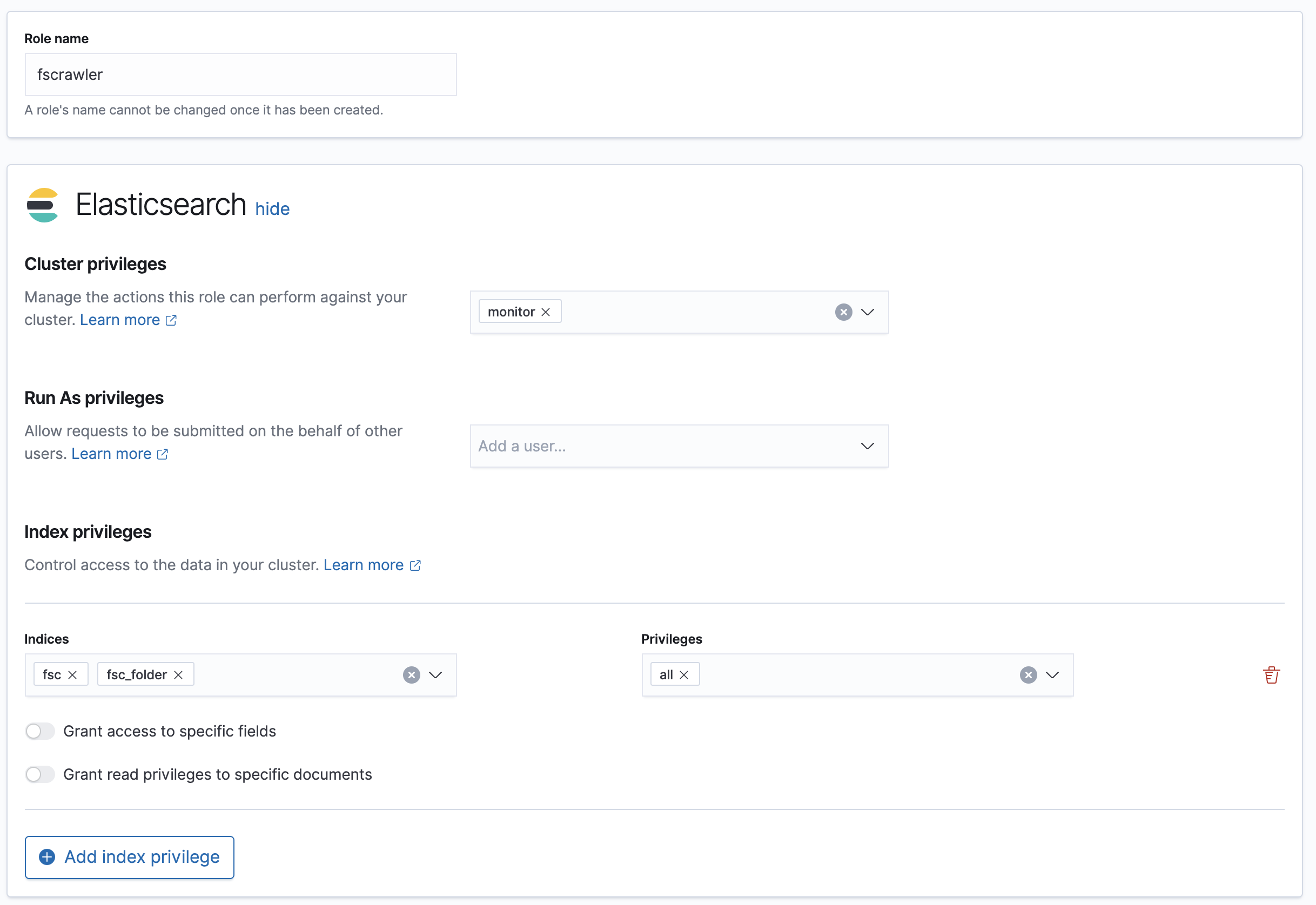

If you want to use a user other than the default elastic user (which is a superuser), you need to grant it the following permissions:

- cluster: monitor
- indices: fsc/all
- indices: fsc_folder/all

where fsc is the FSCrawler index name as defined in Index settings for documents.

This can be done by defining the following role:

```
PUT /_security/role/fscrawler
{
  "cluster" : [ "monitor" ],
  "indices" : [ {
    "names" : [ "fsc", "fsc_folder" ],
    "privileges" : [ "all" ]
  } ]
}
```

This can also be done using the Kibana Stack Management interface.

Then, you can assign this role to the user who will be defined within the username setting.
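Because the role must cover both the document index and the folder index, the role body can be derived from the index name. The Python helper below is a hypothetical sketch that assumes the default <index>_folder naming (adjust it if you set index_folder); for fsc it produces the same body as the role request above.

```python
import json
from typing import Any, Dict


def build_fscrawler_role(index: str) -> Dict[str, Any]:
    """Role body granting FSCrawler access to <index> and <index>_folder (assumed naming)."""
    return {
        "cluster": ["monitor"],
        "indices": [{
            "names": [index, f"{index}_folder"],
            "privileges": ["all"],
        }],
    }


print(json.dumps(build_fscrawler_role("fsc"), indent=2))
```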

SSL Configuration

To send documents to Elasticsearch over an HTTPS connection, you need to perform additional configuration steps:

Important

Prerequisite: you need the root CA chain certificate or the Elasticsearch server certificate in DER format. DER format files commonly have a .cer extension. Certificate verification can be disabled with the ssl_verification: false option.

1. Log on to the server (or client machine) where FSCrawler is running.

2. Run:

```
keytool -import -alias <alias name> -keystore "<JAVA_HOME>\lib\security\cacerts" -file <Path of Elasticsearch Server certificate or Root certificate>
```

keytool prompts you for the keystore password. The default password of the JDK cacerts keystore is changeit.

3. Update the FSCrawler job settings file (_settings.yaml, or _settings.json if you use the JSON format) to connect to your Elasticsearch server over HTTPS:

```yaml
name: "test"
elasticsearch:
  nodes:
    - url: "https://localhost:9243"
```

Tip

If you cannot find keytool, it probably means that you did not add your JAVA_HOME/bin directory to your PATH.

Generated fields

FSCrawler may create the following fields depending on configuration and available data:

For more information about metadata, please read the Tika TikaCoreProperties documentation.

Here is a typical JSON document generated by the crawler:

```json
{
  "content":"This is a sample text available in page 1\n\nThis second part of the text is in Page 2\n\n",
  "meta":{
    "author":"David Pilato",
    "title":"Test Tika title",
    "date":"2016-07-07T16:37:00.000+0000",
    "keywords":[
      "keyword1",
      " keyword2"
    ],
    "language":"en",
    "description":"Comments",
    "created":"2016-07-07T16:37:00.000+0000"
  },
  "file":{
    "extension":"odt",
    "content_type":"application/vnd.oasis.opendocument.text",
    "created":"2018-07-30T11:35:08.000+0000",
    "last_modified":"2018-07-30T11:35:08.000+0000",
    "last_accessed":"2018-07-30T11:35:08.000+0000",
    "indexing_date":"2018-07-30T11:35:19.781+0000",
    "filesize":6236,
    "filename":"test.odt",
    "url":"file:///tmp/test.odt"
  },
  "path":{
    "root":"7537e4fb47e553f110a1ec312c2537c0",
    "virtual":"/test.odt",
    "real":"/tmp/test.odt"
  }
}
```
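Nested objects in this document are addressed with dotted field names in queries, for example file.filename in the search examples. The generic Python helper below (not part of FSCrawler) lists those dotted paths for a document shaped like the one above.

```python
from typing import Any, Dict, List


def dotted_fields(doc: Dict[str, Any], prefix: str = "") -> List[str]:
    """Return the dotted path of every leaf field in a nested JSON object."""
    fields = []
    for key, value in doc.items():
        path = f"{prefix}{key}"
        if isinstance(value, dict):
            fields.extend(dotted_fields(value, path + "."))
        else:
            fields.append(path)
    return fields


sample = {
    "content": "...",
    "file": {"extension": "odt", "filename": "test.odt"},
    "path": {"virtual": "/test.odt", "real": "/tmp/test.odt"},
}
print(dotted_fields(sample))
# ['content', 'file.extension', 'file.filename', 'path.virtual', 'path.real']
```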

Search examples

You can use the content field to perform a full-text search:

```
GET docs/_search
{
  "query" : {
    "match" : {
      "content" : "the quick brown fox"
    }
  }
}
```

You can also search on metadata fields:

```
GET docs/_search
{
  "query" : {
    "term" : {
      "file.filename" : "mydocument.pdf"
    }
  }
}
```

Or run some aggregations on top of them, like:

```
GET docs/_search
{
  "size": 0,
  "aggs": {
    "by_extension": {
      "terms": {
        "field": "file.extension"
      }
    }
  }
}
```
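To run these searches from a script instead of the Kibana console, you can send the same JSON bodies over HTTP (Elasticsearch accepts POST for _search). The Python sketch below uses only the standard library; the URL, the docs index and the API key are placeholders, build_search_request is a hypothetical helper, and the request is built but not sent so the snippet runs without a cluster.

```python
import json
import urllib.request
from typing import Any, Dict


def build_search_request(base_url: str, index: str, body: Dict[str, Any],
                         api_key: str) -> urllib.request.Request:
    """Prepare a POST <index>/_search request authenticated with an encoded API key."""
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/{index}/_search",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json",
                 "Authorization": f"ApiKey {api_key}"},
        method="POST",
    )


query = {"size": 0, "aggs": {"by_extension": {"terms": {"field": "file.extension"}}}}
request = build_search_request("https://127.0.0.1:9200", "docs", query, "<encoded key>")
print(request.get_method(), request.full_url)  # POST https://127.0.0.1:9200/docs/_search
# To execute it: urllib.request.urlopen(request), which needs a reachable cluster
# whose certificate is trusted by your Python installation.
```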